Artificial Intelligence (AI) was on everyone’s lips in 2023, but it’s still unclear what 2024 has in store and what that means for animation studios.

Few topics are as divisive as AI. On one hand, you find outraged artists whose artworks are being ingested by algorithms without their consent. On the other, you find a new wave of creators leveraging AI for self-expression or monetary gain.

Whatever your opinion, we think it’s essential to give the animation industry an overview of the available tools, their practical use cases, and how they might affect your job as an animator. This article will help you find ways to differentiate yourself from generic AI art, and also show you how to incorporate AI as another tool in your toolset when it’s relevant.

The following list is non-exhaustive but tries to cover all the steps of the production process, from concept art to rendering. Feel free to send us your recommendations!

1. Text generation

The first and most publicized use of AI is text generation: using artificial intelligence to automatically write text from an initial prompt. You give it a few words, and it generates a full text based on what it has learned from a large corpus of data. Large language models can handle a variety of general and specialized tasks for animation studios:

- Scriptwriting ideation - Get suggestions for scene or character descriptions, generate dialogue, or even brainstorm plot twists.

- Scene descriptions - Generate detailed scene descriptions to help animators visualize scenes, determine camera angles, and establish the overall mood of an animation.

- Character backstories - By specifying key traits, animators can play with different character nuances to create more well-rounded and compelling personas.

2. Image generation

Text isn’t the only format AI can play with. Perhaps the most controversial technology of 2023, image generation models like DALL·E and Midjourney use advanced neural networks to generate images from textual prompts or from another image:

- Concept art - An animation studio can quickly produce a variety of concept art, exploring different design possibilities for a new project from just a script, or at least a textual description.

- Turn shapes into complete illustrations - Midjourney can understand a rough sketch and turn it into a complete illustration.

- Character and environment design - AI-generated images can be used as a starting point for character design or to explore different ideas for environments and layouts, providing a visual reference for animators to build upon.

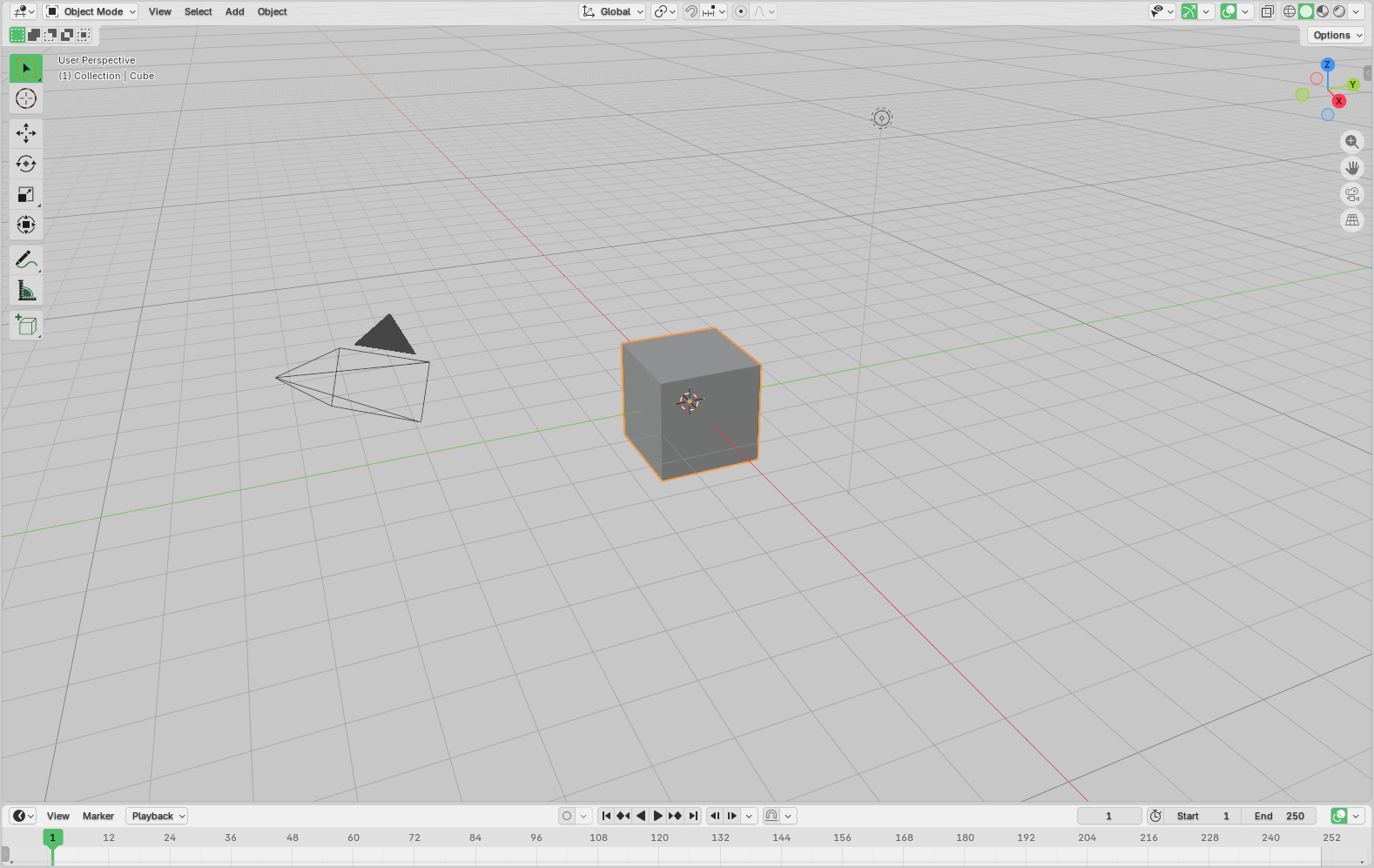

- Texture generation - Specialized tools like Dream Textures (a Blender add-on) can already generate textures for characters, objects, or environments.

Example of texture generation using Dream Textures (Blender)

Combined with text generation, it’s possible to generate entire concept books with little effort. This is a significant advantage for small studios or indie animators who want to pitch concepts to producers at little to no cost.

3. Upscaler

AI upscalers enhance the resolution and quality of images or videos without manual intervention.

- Faster and cheaper rendering - Studios need to meet tight deadlines and deliver content fast, but rendering is often the main bottleneck in the feedback loop. AI upscalers can take low-quality renders and output high-quality previews comparable to regular renders in a fraction of the time. With a Blender upscaler, for example, rendering an image of similar quality can drop from 37 minutes to 5 minutes (about 86.5% less render time).

- Add realism - Upscalers like Photoshop Upscaler or Magnific AI can quickly add detail to any render to make it look sharper and/or more realistic. This is especially useful when you need to enrich a scene quickly or create photo-realistic characters from low-resolution images.
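The 37-to-5-minute figure quoted above can be checked with a few lines of arithmetic. The sketch below is only an illustration: the function names are made up for this example, and the two durations are the ones given in this article.

```python
def time_saved_percent(before_min: float, after_min: float) -> float:
    """Share of the original render time that is saved, in percent."""
    return (before_min - after_min) / before_min * 100


def speedup_factor(before_min: float, after_min: float) -> float:
    """How many times faster the new render is compared to the old one."""
    return before_min / after_min


# Durations quoted in the article: 37 minutes before, 5 minutes after.
saved = time_saved_percent(37, 5)
factor = speedup_factor(37, 5)
print(f"{saved:.1f}% less render time, {factor:.1f}x faster")
# prints "86.5% less render time, 7.4x faster"
```

Note that the two numbers describe the same gain in different ways: the render takes about 86.5% less time, which corresponds to a 7.4x speedup.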

4. Model generation

The technology is moving so fast that we are already going a step beyond images: there are proofs of concept that turn a picture from your phone into production-ready 3D assets.

- Automated asset creation - A production needs a lot of assets, and creating them is often a tedious and time-consuming process. AI can generate 3D models from images, enabling animators to focus more on adding details and polishing the final result.

- Character customization and variation - AI-driven 3D model generation facilitates character customization by automatically generating variations in appearance, clothing, and accessories.

- Procedural animation - For complex environments or large crowds, AI can procedurally generate diverse 3D models and animations at scale, far more efficiently than creating each one by hand.

5. Video generation

Enter a prompt and an optional image, and the AI will generate a video for you!

If you can generate images, you can also generate videos. The main technical difficulty at the moment is generating consistent frames at scale. The technology is still in its infancy, but it’s already possible to generate videos a few seconds long that can be used for storyboarding.

For example, you may have seen viral clips of The Carrot Saga on TikTok or YouTube, which have drawn millions of views.

Links and references

- Adobe Firefly

- Generative AI at Adobe (French)

- Stable Video Diffusion

- Pika Art

- The Carrot Saga (AI animation)

- Sora

6. Real-time rendering

Real-time rendering is the process of generating animation frames in milliseconds for direct display. Rendering is traditionally a computationally expensive task, but AI-powered rendering can provide near-immediate results for a variety of tasks:

- Pre-visualization - Real-time rendering provides animators with immediate feedback on movement, expressions, and interactions to create more engaging characters and environments.

- Interactive storytelling - With real-time rendering, animation studios can create interactive narratives where user choices dynamically influence the storyline. AI algorithms contribute to rendering alternate scenes, characters, and outcomes, providing a more immersive experience for audiences.

- Collaborative prototyping - Real-time rendering is invaluable in the prototyping phase, enabling animators to quickly test different visual styles, lighting setups, and camera angles. Artists working on different aspects of a project can see immediate updates, fostering a more efficient collaborative workflow.

Links and references

- Real-Time AI Rendering with ComfyUI and 3ds Max

- D5 Render

- Real-time ray tracing by Nvidia

- Published papers on real-time rendering

7. Keyframe animation

Keyframe animation is a technique that involves creating a sequence of frames to define the start and end points of a movement. An AI tool like Cascadeur can save animators countless hours:

- Automated interpolation - AI-assisted interpolation generates the frames between keyframes. From a few poses, Cascadeur can generate realistic motion, including keyframes and secondary motion.

- Rig generation - Cascadeur can also auto-generate rigs for complex 3D models.
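To make the idea of generating frames between keyframes concrete, here is a minimal sketch of plain linear interpolation between two keyframes. This is the classic non-AI baseline, not how Cascadeur works internally; the `Keyframe` class and function names are hypothetical and exist only for this illustration.

```python
from dataclasses import dataclass


@dataclass
class Keyframe:
    frame: int    # position on the timeline, in frames
    value: float  # animated value, e.g. a joint rotation in degrees


def interpolate(start: Keyframe, end: Keyframe, frame: int) -> float:
    """Linearly interpolate the animated value at `frame` between two keyframes."""
    if end.frame <= start.frame:
        raise ValueError("end keyframe must come after start keyframe")
    # Normalized position between the two keyframes, clamped to [0, 1].
    t = (frame - start.frame) / (end.frame - start.frame)
    t = max(0.0, min(1.0, t))
    return start.value + t * (end.value - start.value)


def in_betweens(start: Keyframe, end: Keyframe) -> list[float]:
    """Values for every frame from the start keyframe to the end keyframe."""
    return [interpolate(start, end, f) for f in range(start.frame, end.frame + 1)]


# An arm rotating from 0 to 90 degrees over four frames.
start = Keyframe(frame=0, value=0.0)
end = Keyframe(frame=4, value=90.0)
print(in_betweens(start, end))  # [0.0, 22.5, 45.0, 67.5, 90.0]
```

Linear in-betweens like these tend to look mechanical, which is why animators usually add easing curves by hand. AI-assisted tools aim to produce more natural motion from the same small set of poses.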

8. Rotoscopic animation

Rotoscopic animation, or rotoscoping, is a technique that involves tracing over live-action footage to create realistic animations. AI can assist animators in several parts of the rotoscoping process:

- VTuber avatars - Combined with real-time rendering, AI-assisted rotoscoping can be used to create virtual avatars for live streaming or other video content.

- Automatic frame detection - AI algorithms can automatically detect key frames in live-action footage, streamlining the initial phase of the rotoscoping process. This reduces the manual effort required for frame-by-frame tracing.

- Tracing assistance - AI can assist animators by automating certain tracing tasks like outlining characters or objects.
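As a rough illustration of automatic frame detection, the sketch below flags frames that differ strongly from the previous one. It is a simple frame-differencing baseline rather than an AI model, and everything in it is an assumption made for the example: the function names, the threshold value, and the tiny grayscale frames represented as nested lists.

```python
def mean_abs_diff(frame_a, frame_b):
    """Average absolute pixel difference between two same-sized grayscale frames."""
    total = sum(
        abs(a - b)
        for row_a, row_b in zip(frame_a, frame_b)
        for a, b in zip(row_a, row_b)
    )
    pixel_count = len(frame_a) * len(frame_a[0])
    return total / pixel_count


def detect_key_frames(frames, threshold=30.0):
    """Return indices of frames that differ strongly from the frame before them."""
    if not frames:
        return []
    key_frames = [0]  # the first frame is always kept as a reference
    for i in range(1, len(frames)):
        if mean_abs_diff(frames[i - 1], frames[i]) > threshold:
            key_frames.append(i)
    return key_frames


# Tiny 2x2 grayscale "frames" (0 = black, 255 = white), for illustration only.
dark = [[10, 10], [10, 10]]
dark_shifted = [[12, 10], [10, 14]]
bright = [[200, 210], [205, 200]]

frames = [dark, dark_shifted, bright, bright]
print(detect_key_frames(frames))  # [0, 2]
```

Real footage would need a more robust measure than raw pixel differences, since lighting changes and camera noise can trigger false positives. That is the kind of gap learned models are meant to close.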

9. Image recognition

Image recognition is the process of identifying and classifying objects within images.

- Scene breakdown and analysis - AI algorithms can analyze complex scenes, automatically identifying and categorizing elements like characters, objects, backgrounds, and lighting conditions. This simplifies scene breakdown by describing the visual components of each frame, which in turn speeds up reviews.

- Annotations - Combined with text generation tools, AI can automatically annotate storyboards with descriptions or notes. This simplifies communication between the teams involved in the animation process and gives everyone a clear understanding of the intended visual and narrative elements in each preview frame.

- Facial recognition and expression analysis - Animators can track facial expressions and body motion to drive realistic animations. This is how VTuber avatars implement lip-syncing or hand-syncing.

- Quality control and error detection - AI can automatically detect anomalies, errors, or inconsistencies within images. This improves accuracy and helps studios identify and fix issues early in the production pipeline.

10. Voice acting

AI-assisted voice acting involves generating or enhancing voice performances for animated characters or other audio content.

- Text-to-speech synthesis - AI can convert written text into spoken words with natural-sounding intonation and expression. Animation studios can use TTS for quick prototyping, generating placeholder voiceovers, or experimenting with dialogue variations before engaging human voice actors.

- Voice cloning and replication - AI can analyze and replicate a specific voice actor's style, tone, and nuances, effectively cloning the voice. This feature is useful for maintaining consistency across projects or creating additional lines of dialogue without requiring the original voice actor's availability.

- Multilingual voice generation - AI-powered voice generation can produce speech in multiple languages, offering flexibility for global audiences: animation studios can easily localize content, ensuring that characters speak authentically in different languages without the need for extensive manual voice recording.

Conclusion

AI is already transforming the animation industry, and it will continue to do so in the coming years. While it’s still in its early days, we can already see the potential of AI for animation studios, from concept art to rendering and distribution.

For animation artists, AI is a powerful tool to streamline the production process and allow for more creativity regardless of your initial skills.

It’s important to remember that AI is not a replacement for human creativity but another tool in the animator’s toolkit, providing new ways to express ideas and bring them to life. In the near future, we can expect a new wave of one-person animation studios, more partnerships between studios, and more projects overall thanks to lower labor costs.

Last but not least, AI platforms will have to address authors’ rights and find the right model before they can be widely adopted in productions. Generative art cannot thrive without acknowledging artists. Once these issues are resolved, creativity will benefit from this new technology, for the pleasure of our eyes!

Make sure to join us on Discord if you want to discuss the future of creative pipelines or just want to hang out with 1,000+ animation experts from all over the world!